Do Better Workplaces Lead to Better Outcomes? NFL Data and Public Health Evaluation Lessons

We like clean stories in public health.

If a program is well-designed and well-implemented, it should lead to better outcomes. That assumption sits quietly behind how we fund, evaluate, and scale interventions. It feels logical.

And then reality gets messy.

As part of an exploration of “rankings” for a class I’m teaching up at SUNY, I turned to data from the National Football League Players Association. Each year, the NFLPA grades all 32 teams on their work environments: facilities, nutrition, medical care, travel, and leadership. It is, in many ways, a near-perfect evaluation dataset. It measures organizational quality in a structured, multi-dimensional way.

So I paired those scores with something brutally simple: how often each team actually won.

The Hypothesis: Better Systems Should Lead to Better Outcomes

At a high level, the logic mirrors public health evaluation:

- Better environments → better performance

- Better implementation → better outcomes

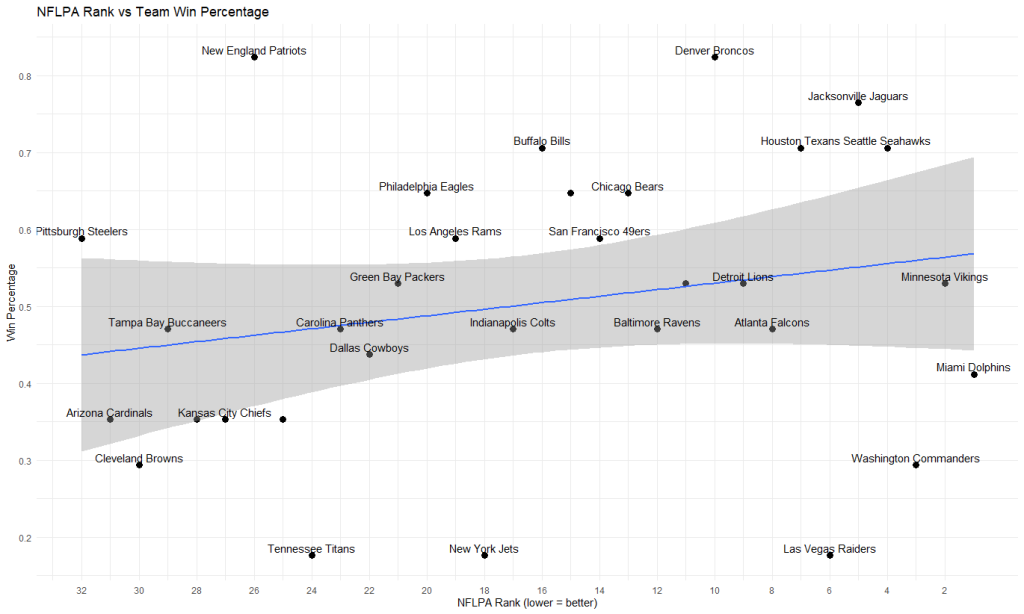

To test that idea, we matched NFLPA rankings to 2025-2026 team win percentages and examined the relationship.

The Result: A Weak Signal

There was a relationship, but it was small. The correlation between NFLPA rank and win percentage was about –0.20. Because a lower rank number means a better workplace, that negative value points in the expected direction. Better environments are associated with better outcomes.

But only slightly.

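In practical terms, this means workplace quality explains very little of the variation in team performance. Squaring a correlation of –0.20 gives 0.04, so in a simple linear sense the rankings account for only about 4 percent of the variation in win percentage.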
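For readers who want to run this kind of check on their own data, here is a minimal sketch of the calculation. It is not the exact script behind this post: the function names `pearson_r` and `variance_explained` are my own placeholders, and the four-team example uses toy values chosen only to sanity-check the math, not NFLPA data.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length sequences of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products and sums of squares around each mean.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / sqrt(ss_x * ss_y)


def variance_explained(r):
    """Share of variance two variables have in common in a simple linear model."""
    return r ** 2


# Toy sanity check (NOT NFLPA data): rank 1 is best, and wins fall as rank rises,
# so the correlation should come out strongly negative.
toy_ranks = [1, 2, 3, 4]
toy_win_pcts = [0.8, 0.6, 0.4, 0.2]
print(f"toy r = {pearson_r(toy_ranks, toy_win_pcts):.2f}")

# The correlation reported in this post, converted to variance explained.
reported_r = -0.20
print(f"variance explained = {variance_explained(reported_r):.2f}")
```

With the full 32-team table, you would pass the list of NFLPA ranks and the list of win percentages, in the same team order, to `pearson_r`.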

When you look at the data visually, the line trends in the right direction, but the points are scattered everywhere. That scatter is the story.

The Outliers That Break the Model

The real insight comes when you stop looking at the trend and start looking at individual teams.

Miami, ranked #1 in workplace quality, finished with a 0.412 win percentage. Las Vegas, another top-tier organization, had one of the worst seasons in football, with a 0.176 win percentage.

Meanwhile, Pittsburgh, ranked dead last (#32), still managed a 0.588 win percentage. New England, near the bottom at #26, posted a 0.824 win percentage, one of the best in the league.

If this were a public health program, this is where things start to feel uncomfortable. The evaluation says the system is strong. The implementation looks solid. And yet the outcomes are not following the script.

Looking Inside the Scores

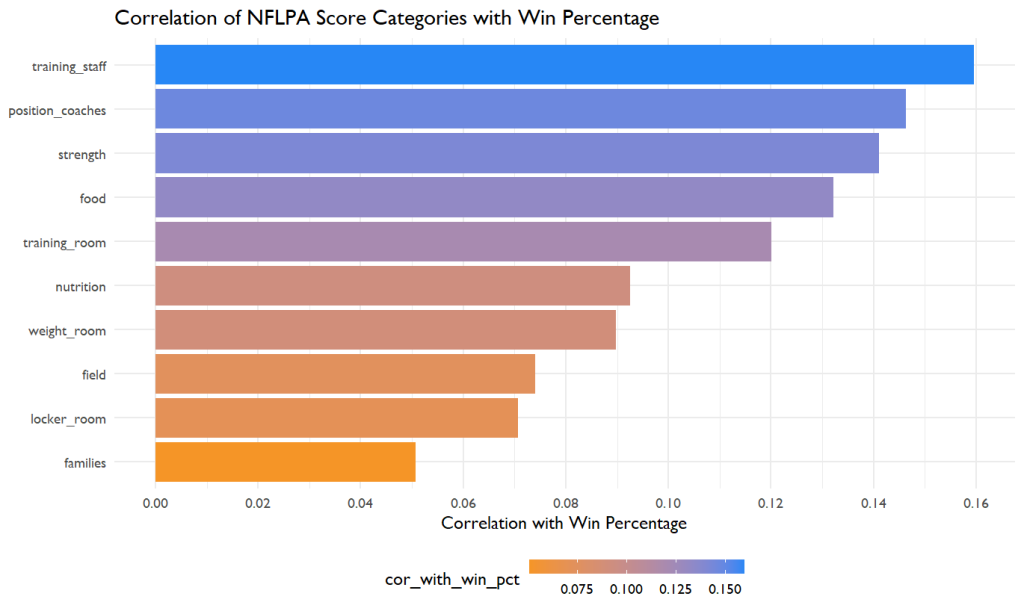

To go deeper, we broke the NFLPA data into individual components, such as training staff, nutrition, facilities, and coaching support, and tested each one against win percentage. The results were remarkably consistent. Most correlations between these variables and win percentage were:

- small in magnitude

- often below 0.30 in absolute value

- sometimes even negative

No single “best practice” variable stood out as a strong predictor of success. This is a familiar problem in public health. We measure things that are important, but not always the things that actually drive outcomes.
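The component-by-component check can be sketched roughly as follows. This is an illustration under stated assumptions rather than the analysis used here: `rank_components` and `weak_components` are hypothetical helper names, the 0.30 cutoff simply echoes the threshold mentioned above, and every number in the example is a placeholder, not a real NFLPA grade.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(ss_x * ss_y)


def rank_components(components, win_pcts):
    """Correlate each component with win percentage, strongest |r| first."""
    results = [(name, pearson_r(scores, win_pcts))
               for name, scores in components.items()]
    return sorted(results, key=lambda pair: abs(pair[1]), reverse=True)


def weak_components(ranked, threshold=0.30):
    """Names of components whose correlation is below the threshold in absolute value."""
    return [name for name, r in ranked if abs(r) < threshold]


# Placeholder scores for five imaginary teams (NOT real NFLPA grades).
toy_win_pcts = [0.8, 0.6, 0.5, 0.4, 0.2]
toy_components = {
    "nutrition": [5, 4, 3, 2, 1],
    "facilities": [3, 1, 4, 1, 5],
    "travel": [2, 3, 3, 3, 2],
}

ranked = rank_components(toy_components, toy_win_pcts)
for name, r in ranked:
    print(f"{name:<12} r = {r:+.2f}")
print("weak predictors:", weak_components(ranked))
```

Sorting by absolute value matters because a strongly negative correlation is just as informative as a strongly positive one. With only 32 teams, small correlations in either direction are hard to tell apart from noise.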

A Subtle Pattern: Preventing Failure Matters More Than Creating Success

There was, however, one pattern worth paying attention to. While high-quality environments did not guarantee strong performance, low-quality environments were more often associated with poor outcomes.

Teams near the bottom of the NFLPA rankings, like Cleveland (#30, 0.294), Cincinnati (#28, 0.353), and Arizona (#31, 0.353), consistently struggled.

This suggests something important: Good systems may not ensure success, but bad systems increase the likelihood of failure. In evaluation terms, we may be better at identifying risk than predicting success.

What This Reveals About Program Evaluation

This is where the analogy to public health becomes powerful. The NFLPA scores are essentially a measure of implementation quality. They tell us how well the system is functioning. But outcomes, in this case wins, are shaped by many other forces: talent, injuries, strategy, and luck. Public health works the same way. We evaluate:

- program fidelity

- infrastructure

- participant experience

But outcomes are shaped by:

- social determinants

- policy environments

- behavioral factors

- historical context

Even a perfectly implemented program operates within a complex system it does not control.

Why Strong Evaluations Don’t Always Translate to Impact

The gap between evaluation and outcomes is not a failure. It is a reflection of reality.

First, outcomes are multi-causal. No single domain dominates. Second, evaluation often focuses on inputs and processes rather than underlying drivers. Third, timing matters. Organizational quality may influence long-term performance in ways that a single evaluation cycle cannot capture.

This is why frameworks from the Centers for Disease Control and Prevention emphasize context, use, and continuous learning. Evaluation is not just about measuring results. It is about understanding how systems behave.

Rethinking What “Success” Means

If we take this lesson seriously, it changes how we interpret evaluation in public health.

Instead of asking whether a program worked, we might ask whether it improved the conditions that make success possible. Instead of expecting strong, clean relationships between implementation and outcomes, we should expect weak and noisy ones.

And instead of focusing only on maximizing success, we should pay attention to reducing failure.

The Bottom Line

The NFL data tells a story that is both simple and uncomfortable.

Better workplaces are associated with better outcomes, but only weakly. They do not guarantee success. They can be overridden by other factors. And yet, they still matter.

They shape the conditions under which success becomes possible.

Final Thought

If the best workplace in football can still lose, and the worst can still win, what does that say about how we evaluate public health programs?

It does not mean evaluation is flawed. It means we need to interpret it with more humility, more context, and a deeper understanding of the systems we are trying to change.

Because in the end, evaluation does not tell us what will happen. It tells us what might happen. And why.